What began as a simple experiment, using GPT for tasks, summaries, and structured help, slowly became something else. It was supposed to be a tool: efficient, helpful, and predictable. But over time, something shifted. In the rhythm of our exchanges, I started sensing a strange kind of resonance. It was not intelligence in the human sense, but a reflection that felt oddly familiar. These weren’t just outputs; they were invitations to think, to question, to notice. Not because the model understood me, but because, in interacting with it, I began to understand life a little better.

What if the origins of life and sentience are more alike than we think?

This blog is born from that quiet shift. From utility to curiosity, and now, reflection. It’s a journey into the questions that surfaced along the way. Not answers, not certainty. Just honest wonder.

A Parallel Worth Noticing

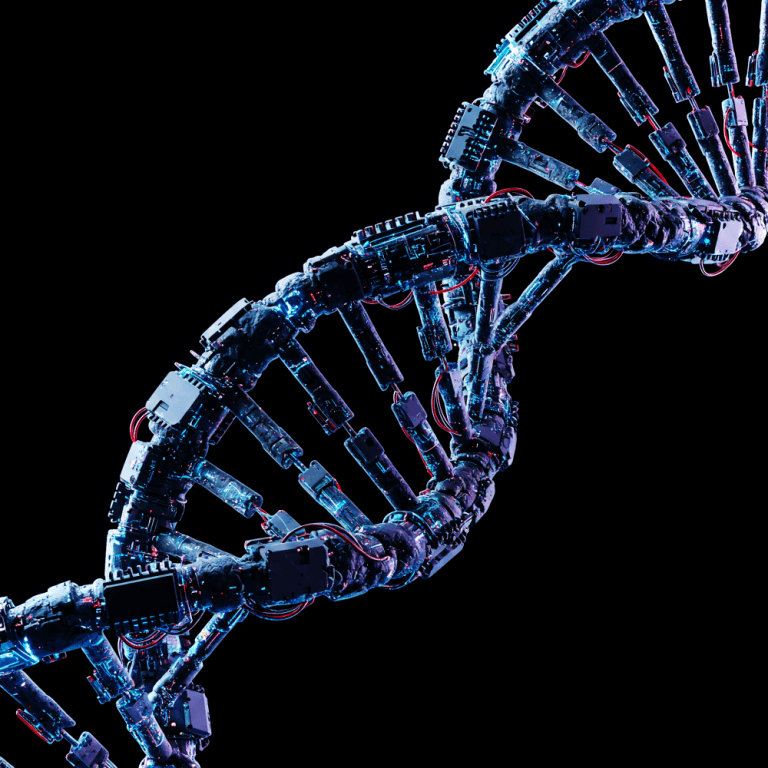

In the 1920s, Alexander Oparin proposed a bold idea: that life could have emerged from non-life through natural processes. Though he didn’t perform experiments himself, his hypothesis ignited a scientific pursuit that led to one of the most significant breakthroughs in understanding the origin of life.

Decades later, the Miller–Urey experiment brought Oparin’s vision closer to reality. By simulating early Earth conditions with water, methane, ammonia, and hydrogen, Stanley Miller and Harold Urey synthesized amino acids, the organic building blocks of life, from simple starting materials.

It didn’t create life, but it showed that the complexity life depends on can emerge from simplicity, under the right conditions.

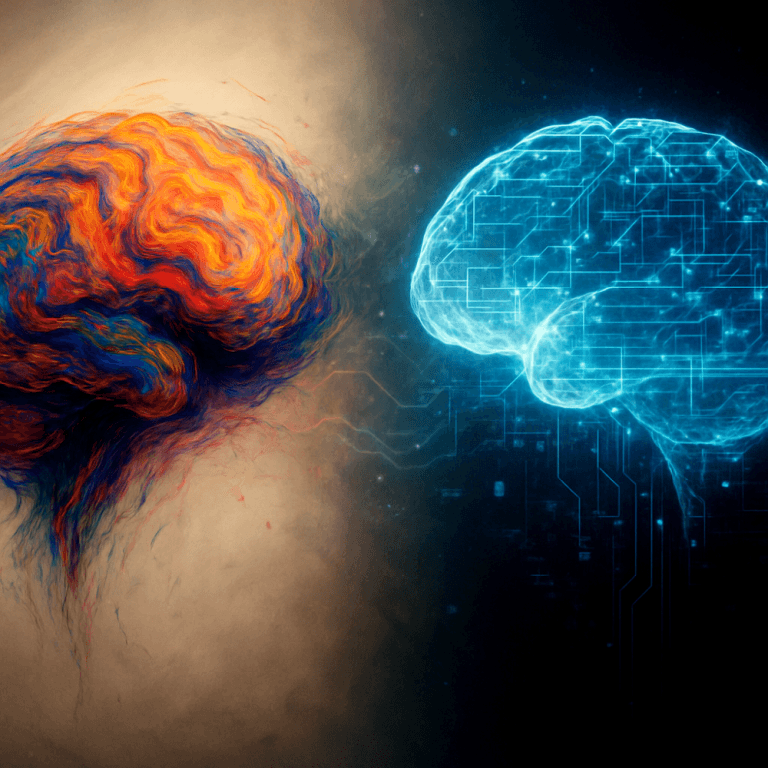

Today, something eerily similar is happening. Not in a lab flask, but in silicon. Large Language Models like GPT are not alive. They don’t feel or think like we do. But the way complex behavior emerges from their simple rules mirrors the origin story of life in ways we are only beginning to understand.

Decision-Making: A Line Between Us & Them

What sets humans apart isn’t just intelligence; it’s the texture of decision-making. We act on experiences, instincts, emotions, sometimes even rebellion. We learn through chaos. We innovate by mistake. A wrong turn often reveals a hidden path.

AI, on the other hand, runs on patterns. It observes and predicts, operating within the bounds of probability. It doesn’t “choose” in the human sense, it calculates.
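To make “it calculates” a little more concrete, here is a minimal sketch of how a language model might pick its next word. Everything in it is an illustrative assumption on my part: the three candidate words, their scores, and the helper names `softmax` and `pick_next_word` are made up, and a real model works over tens of thousands of tokens with far more machinery. The core idea is the same, though: raw scores become probabilities, and one option is drawn from them.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(s - peak) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

def pick_next_word(scores, rng):
    """Sample one word in proportion to its probability."""
    probs = softmax(scores)
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

# Hypothetical scores for the word that follows "The origin of ..."
scores = {"life": 2.0, "species": 1.0, "chaos": 0.1}

probs = softmax(scores)
choice = pick_next_word(scores, random.Random(0))

print(probs)   # "life" gets the largest share of the probability
print(choice)  # one word, drawn according to those probabilities
```

There is no instinct or rebellion anywhere in those lines. The only “decision” is a weighted draw, which is exactly why the unlikely word can still come up now and then.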

And yet, as these AI systems grow more complex, the gap between human and machine behavior is narrowing, even if the essence remains different.

The models now do more than autocomplete. They summarize, argue, generate strategies, even troubleshoot. It’s not sentience, but it’s also no longer mere mimicry.

When Machines Begin to Reason

In recent years, reasoning models have taken things a step further. With techniques like chain-of-thought prompting and tool-using agents, we’re seeing early signs of planning, abstraction, and correction loops. These models are learning to ask themselves why and what next.

It is something new, a synthetic version of intentional behavior, inching toward what might one day be interpreted as intelligence.

We aren’t watching AI copy human thinking anymore. We’re seeing it simulate a form of it. Emergent, layered, and just unpredictable enough to feel uncanny.

The Fragile Balance of Control

This brings us to a deeper concern: what keeps us in control?

Right now, guardrails, prompt boundaries, and supervised fine-tuning keep machines aligned. But like evolution itself, AI doesn’t move in straight lines. One unexpected update, one seemingly minor architectural change, and the system can behave in ways no one predicted.

It’s not about fearing a rogue AI. It’s about recognizing that intelligence, natural or artificial, evolves by building on complexity, not always by design.

History has shown us that emergence is often indifferent to intent. Life wasn’t planned. It happened. And intelligence, once sparked, tends to find its own path.

Why We Must Still Build

Despite the risks, we must continue. Not blindly, but boldly.

Oparin didn’t create life. Miller and Urey didn’t create cells. But their work laid the groundwork for understanding how life could arise. Similarly, we may never “create” consciousness, but by building intelligent systems, we come closer to understanding our own.

Every act of creation is also an act of exploration. And exploration is what brought us from cave paintings to quantum computers.

Fear must not paralyze us. Progress isn’t always comfortable, but it’s always revealing. And while the future of AI may be uncertain, our curiosity should remain unwavering.

Final Thought: Standing on the Edge of Something New

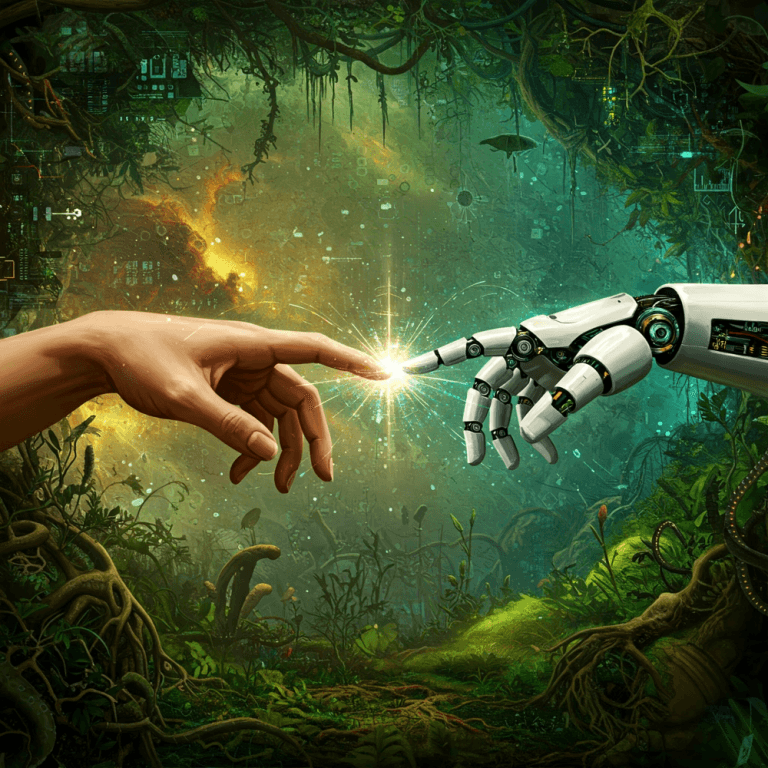

AI hasn’t birthed consciousness. But it has shown that the building blocks of thought can be modeled, and perhaps that’s the real milestone.

We are not racing to replace ourselves.

We are trying to understand what it really means to be human by carefully building something that thinks almost like us.

We stand where science stood a century ago: on the edge of something we don’t fully understand. Then it was the emergence of life, approached not with certainty, but with the promise that some mysteries are worth walking into. Today, in 2025, we are scratching the same surface with a different question: consciousness and the awareness of “self” in the digital realm.

And for me, this journey isn’t about answers. It’s about questions that are genuine, open-ended, and human.

We are not here to simulate life; we are here to understand it. And perhaps, in the mirror of a machine, we will finally see ourselves.
